Computational Auditory Perception with Dallinger

- Project Type: Education

- Client: Max Planck Institute for Empirical Aesthetics

- Location: Frankfurt, Germany

- Technology: Python

Enhancing the Dallinger platform to make experiments easier to run and analyze

I cannot recommend the Jazkarta team strongly enough: they are incredibly professional, creative, hard working, and fervently committed to providing the very best service and in-depth solutions to software problems. We have been working with them for over a year and have been extremely pleased with their attentiveness to our needs, as well as their fluency with the newest trends in software development. They also provide truly beautiful code. Outstanding!

Dallinger is a tool to automate social science experiments using combinations of automated bots and human subjects recruited on platforms like Mechanical Turk. It fully automates the process of recruiting participants, obtaining informed consent, arranging participants into a network, running the web-based experiment, coordinating communication, recording and managing the data, and paying the participants. Jazkarta's previous work on Dallinger took it from an early prototype stage to a massively scalable platform.
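To make that lifecycle concrete, here is a minimal sketch in plain Python. It is a toy model only and does not use Dallinger's actual API: the `Participant`, `ChainNetwork`, and `run_experiment` names, the chain-shaped network, and the flat payment are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Participant:
    """A recruited human subject or an automated bot (hypothetical model)."""
    name: str
    is_bot: bool = False
    consented: bool = False
    paid: float = 0.0


@dataclass
class ChainNetwork:
    """Participants arranged in a line; each one sees the previous response."""
    members: list = field(default_factory=list)
    responses: list = field(default_factory=list)

    def add(self, participant):
        self.members.append(participant)

    def run(self, seed, respond):
        # Pass a message down the chain and record every response.
        message = seed
        for participant in self.members:
            message = respond(participant, message)
            self.responses.append((participant.name, message))
        return self.responses


def run_experiment(candidates, seed, respond, payment=1.0):
    """Admit participants, arrange them, run the task, record data, pay."""
    network = ChainNetwork()
    for person in candidates:
        # Humans must give informed consent; bots are admitted directly.
        if person.is_bot or person.consented:
            network.add(person)
    data = network.run(seed, respond)
    for person in network.members:
        # Only human participants are compensated in this toy model.
        if not person.is_bot:
            person.paid = payment
    return data


people = [
    Participant("ann", consented=True),
    Participant("bot-1", is_bot=True),
    Participant("bob"),  # never consented, so never joins the network
]
data = run_experiment(people, "la", lambda p, message: message + "!")
```

In this sketch the non-consenting candidate is left out of the network and goes unpaid, while the bot takes part without compensation. A real Dallinger experiment handles recruitment, consent, and payment through the platform itself rather than through in-memory objects like these.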

The Research Group in Computational Auditory Perception at the Max Planck Institute for Empirical Aesthetics was an early adopter of Dallinger. The group's research interests include internal representations of auditory and visual information, that is, how the external world is mapped onto internal representations in our minds. They needed some Dallinger improvements for their research projects, so they turned to Jazkarta and our unique expertise for help.

Our first project at Max Planck was to teach a workshop titled Massive Online Data Collection with Dallinger. Participants from the research group learned the nuts and bolts of using Dallinger to run their experiments, and two of Jazkarta's developers were able to see and discuss the researchers' Dallinger experiments firsthand.

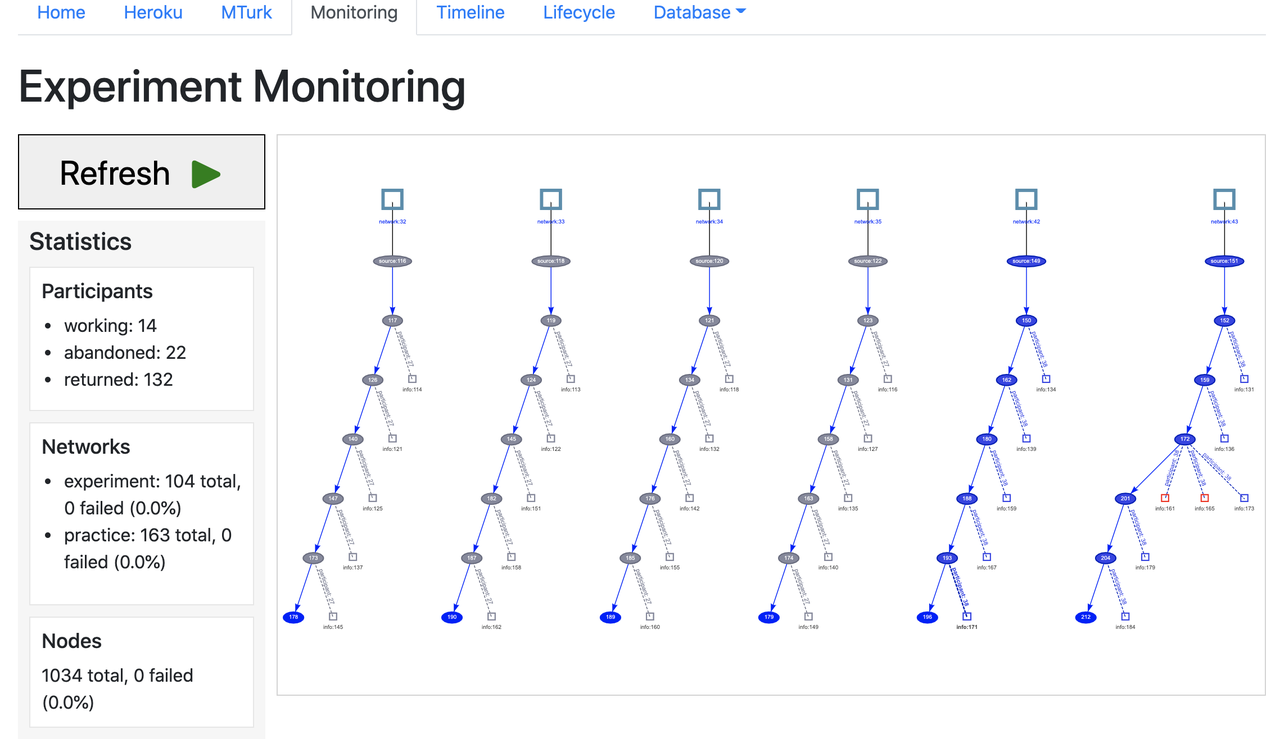

After the workshop, we turned our attention to a raft of Dallinger fixes and enhancements. The most notable new feature was a dashboard displaying information about a running experiment, including:

- Visual representations of the state of the experiment network

- Real-time views of the underlying database tables

- Mechanical Turk recruitment details (HITs assigned, completed, pending, etc.)

- Information about the platform infrastructure, like Heroku and Redis data storage

We also added better tools for compensating participants, created a pytest plugin so authors can more easily write tests for their own experiments, and made it possible to continue an archived experiment with new participants. Perhaps most importantly, we vastly improved startup time for developers, so building new experiments is faster and more fun.

We are proud to be part of a project that empowers social science researchers to be more productive.